Brain Rot Through AI - Or Superintelligence. The Choice Is Always Yours.

Why the 'AI makes us dumb' discourse can't see what it's missing - and why that blind spot is the actual problem

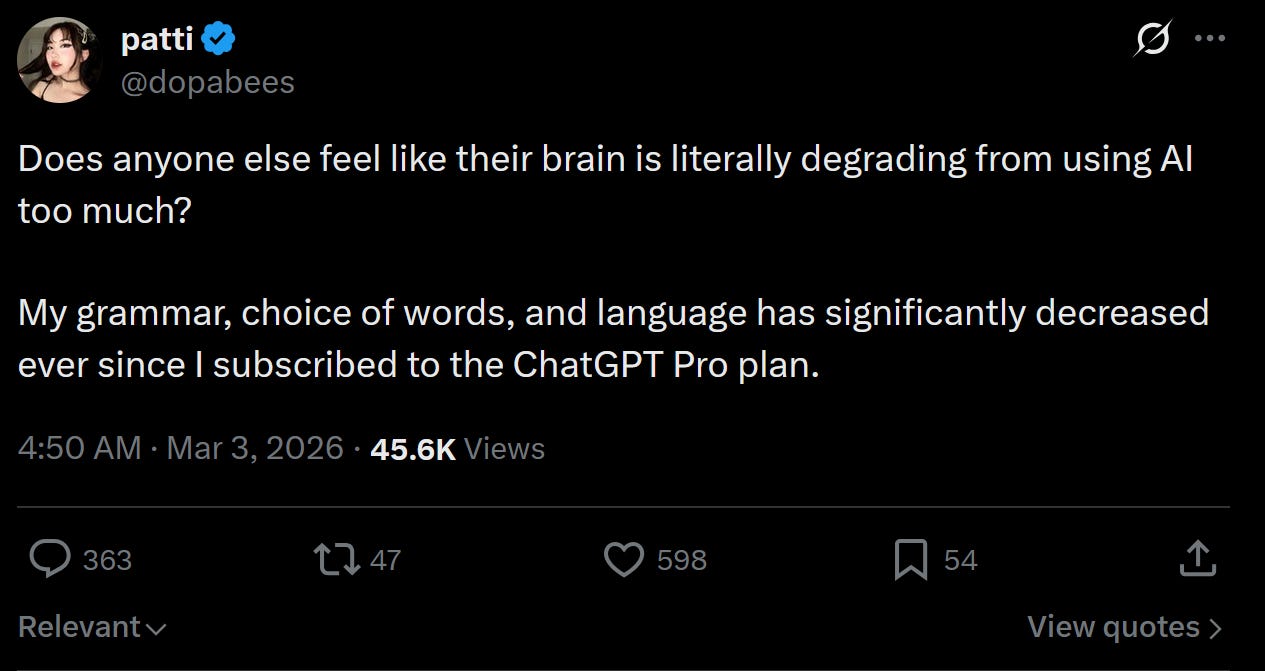

A recent post on X gained considerable traction. User @dopabees described experiencing cognitive decline since subscribing to ChatGPT Pro - deteriorating grammar, difficulty reading paragraphs aloud, an inability to enjoy strategy games that had previously engaged her, and a growing sense that her own writing had become infantile compared to GPT output. The post drew tens of thousands of views and widespread resonance. The implicit thesis: AI degrades cognition.

The concern is not new and not without substance. But the framing reveals more about the current discourse than about the actual mechanism at work.

The Architecture of the Problem

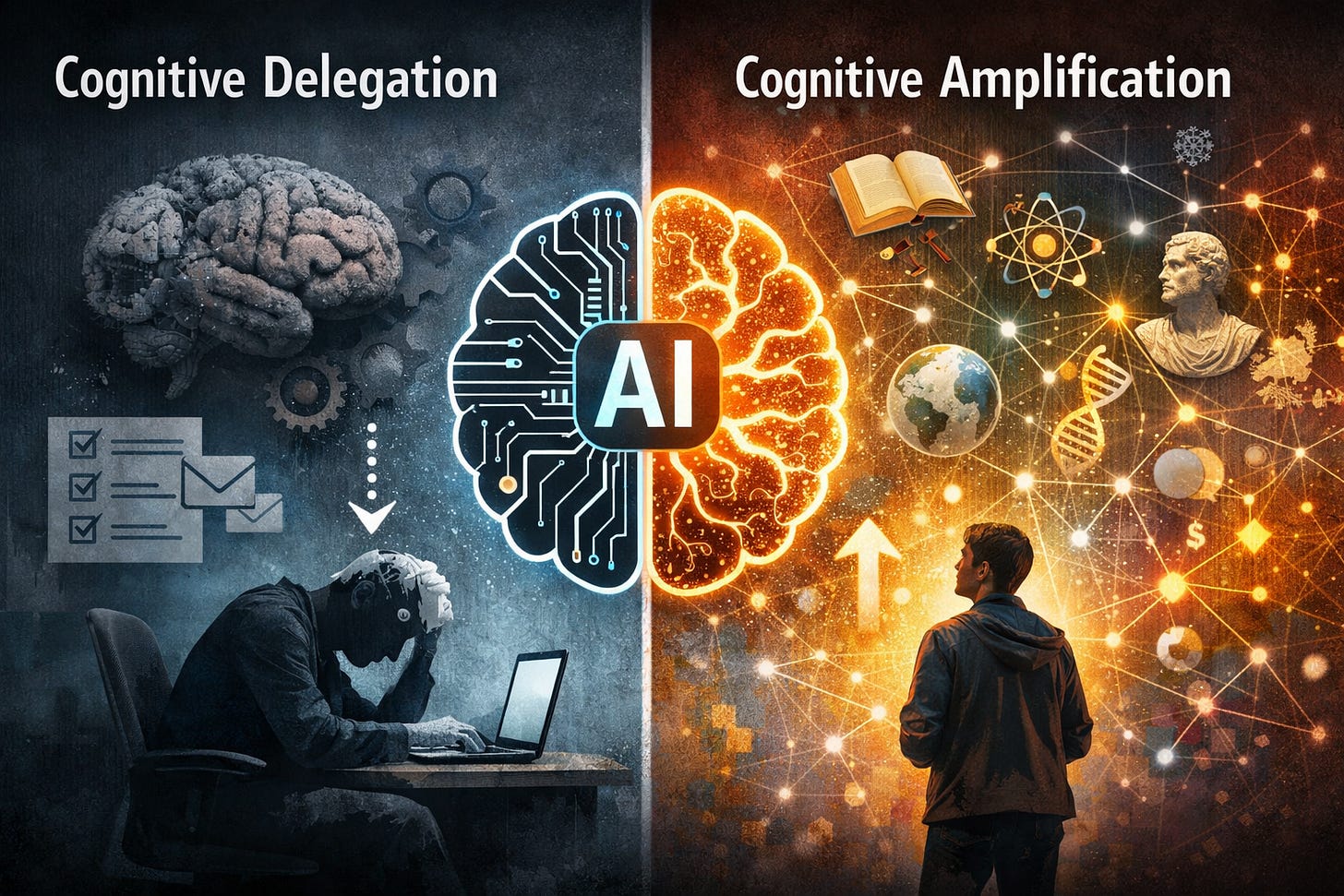

We need to distinguish between two structurally different operations that currently travel under the same label of “using AI.”

The first is cognitive delegation. You hand the system a task that your own neural architecture would otherwise have processed - formulation, decision-making, conceptual organization - and you receive a finished product. The brain’s role is reduced to evaluating output rather than generating it. Over time, and this is neither controversial nor surprising, the generative capacity atrophies: neural pathways that aren’t activated degrade. This is likely the mechanism @dopabees is describing, and there is no reason to doubt that it’s real.

The second operation has no established name in the public discourse, which is itself revealing. We’ll call it cognitive amplification: using AI not to replace thought but to extend its reach into territory that would otherwise remain inaccessible - not due to lack of intelligence, but due to lack of exposure, vocabulary, or interdisciplinary range.

The distinction between these two operations is not a matter of degree. It is categorical. And the entire “AI makes us dumb” discourse collapses the second into the first, rendering it invisible.

What Cognitive Amplification Actually Looks Like

Consider the following prompt, which we offer as an example of the second mode:

“To what extent is modern rule today largely exercised through an enormous number of invisible double binds in all areas of life, which ‘keep large parts of the population in check’ through the cannibalization of enormous psychological energy?”

Notice the structure. This is not delegation. The question itself already presupposes a conceptual framework - Bateson’s double bind theory, elements of Foucault’s biopower, echoes of Byung-Chul Han’s psychopolitics - and it asks the system not to produce a result but to open a problem space. The cognitive work doesn’t end when the AI responds. It begins.

What a capable AI returns to a prompt like this is not an answer but a cartography: connections between disciplinary frameworks that would normally require years of institutional access to assemble. The user then has to evaluate, contest, extend, discard. The AI provides the raw material for synthesis; the synthesis itself remains a human operation.

Before AI, entering this kind of interdisciplinary conceptual space required either significant academic training or the biographical accident of knowing the right interlocutors. The vocabulary alone - double binds, repressive desublimation, psychopolitics - functions as a gatekeeping mechanism, not because the ideas are inherently inaccessible, but because the pathways to them are institutionally restricted. AI doesn’t remove the difficulty of thinking at this level. It removes the access barrier to thinking at this level. The distinction matters.

The Framing Problem

The dominant discourse around AI and cognition operates almost exclusively on the axis of productivity. AI as writing tool, code assistant, summarizer. The question is always: what does AI do for you?

This framing is not neutral. It is the framing that sells subscriptions, and it is also the framing that produces the cognitive atrophy people are now noticing - because productivity tools are, by definition, tools of delegation. They remove friction, and friction is precisely what cognitive development requires.

But there is a second axis - epistemic expansion - that is almost entirely absent from the conversation. Not “what does AI do for you” but “what does AI enable you to think that you couldn’t think before?” The question about invisible double binds is an instance of this second axis. It doesn’t save time. It doesn’t increase output. It opens a problem space that the user then has to inhabit with their own cognitive resources.

The fact that these two axes coexist on the same platforms, using the same technology, and produce diametrically opposite cognitive outcomes is not a paradox. It’s a sorting mechanism. The technology amplifies whatever orientation the user brings to it. Delegation produces atrophy. Amplification produces expansion. The tool is indifferent.

What This Implies

We want to be careful here not to reproduce the moralizing structure we’re criticizing. The point is not that delegation is “bad” and amplification is “good” - there are perfectly legitimate uses for cognitive delegation, and no one needs to feel guilty about asking AI to draft an email.

The point is structural: the “AI makes us dumb” narrative locates agency entirely in the technology and removes it from the user. This is the same move as “television makes us passive” or “social media makes us depressed” - it produces a clean causal story with a clear villain, which is rhetorically effective and analytically wrong. The technology is a variable, but it is not the determining variable. The determining variable is the orientation of use, which is itself a function of what the user wants from their own cognition.

This is where the analysis becomes uncomfortable, because it reintroduces something the contemporary discourse would prefer to keep off the table: the role of individual intellectual disposition. Not everyone uses the same tool the same way, and the divergence in outcomes is not random - it correlates with pre-existing cognitive habits, curiosity structures, and tolerance for conceptual difficulty.

AI doesn’t create this divergence. It accelerates it. And the acceleration is widening the gap between modes of cognitive engagement faster than any previous technology made possible.

The Irony

There is a structural irony worth noting. The very question we cited - about invisible double binds that cannibalize psychological energy - describes a phenomenon of which the AI discourse is itself an instance. The framing of AI as purely a productivity tool, the reduction of a categorically ambiguous technology to a single axis of “does it help or does it harm,” the inability of the discourse even to name the second mode of use - these are themselves double binds. They constrain the range of permissible thought about the technology while appearing to enable free discussion of it.

The person who only encounters AI through the productivity lens is not being lied to. They are being given a framework that is internally coherent but radically incomplete - and the incompleteness is invisible from within the framework itself.

Which is, incidentally, a fairly precise definition of how double binds operate.